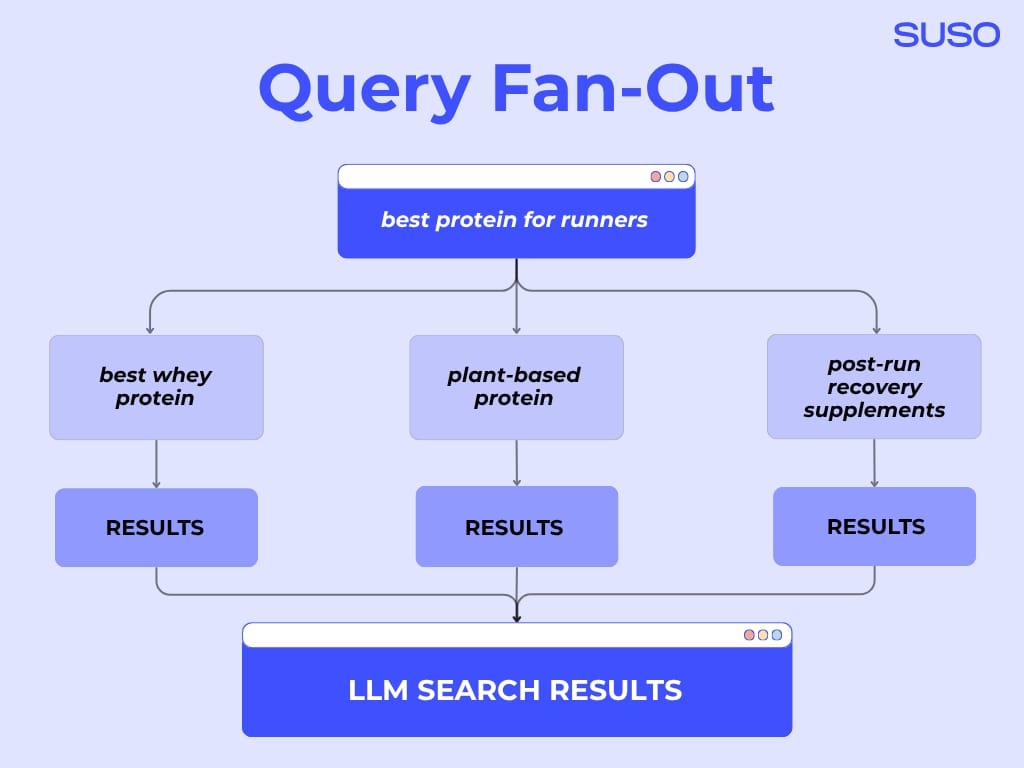

AI models use Query Fan-Out to understand true intent, expanding one search into many related sub-questions. This reasoning process goes far beyond simple keyword matching. For SEO, this means visibility in AI answers now depends on content that covers this full cluster of related topics and entities, not just the original search term.

SEOs can fan out a query for AI search optimization by using SEO tools, AI prompts, and “People Also Ask” results; analyzing Search Console and Google Analytics data; gathering insights from sales and support teams; and listening to social conversations.

When One Query Becomes Many

When someone asks a large language model (LLM) a question, its goal isn’t to just match the query to keywords on web pages. LLMs aim to understand and answer the user’s true intent. To do this, models from Google, OpenAI, and others go far beyond the initial query using a process called Query Fan-Out.

Query Fan-Out is a key method of AI reasoning. It takes a single query and instantly expands it into dozens of related meanings and sub-questions. The AI uses this process to retrieve and synthesize information from multiple angles, allowing it to build a comprehensive, tailored answer.

The challenge for SEOs is that while we optimize for one keyword, all major AI systems are reasoning across many. If your content doesn’t connect to those wider interpretations, your brand risks losing an opportunity to be cited and mentioned in these new AI-driven answers.

Let’s talk about Query Fan-Out, how it works, and how to optimize your content so your brand stays visible across these expanding search pathways.

What Exactly Is Query Fan-Out?

Query Fan-Out is the process where an AI or search model takes a single user query and breaks it into multiple sub-queries to explore a wider or deeper set of possible answers.

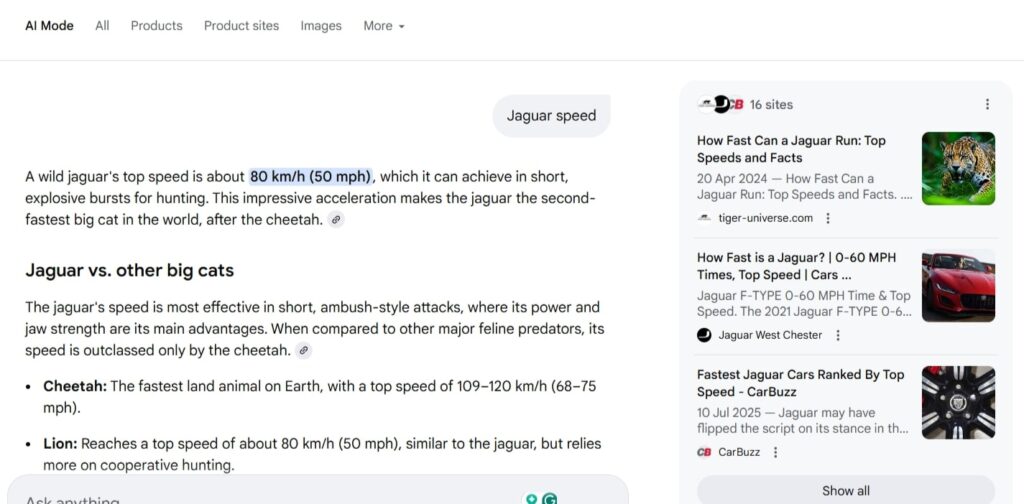

For example, if you search for “best protein for runners,” an LLM doesn’t stop there. It fans out the query into variations such as “best whey protein,” “plant-based protein,” or “post-run recovery supplements.”

Each branch helps the system retrieve a more complete and contextually accurate picture of what you might be looking for.

This underpins how AI Mode in Search works. Instead of matching keywords, AI interprets meaning, using reasoning to predict what users really want to know and pulling information from multiple sources to answer it coherently.

Inside the Black Box: How Query Fan-Out Works in Google’s AI Mode

When you type a query into an AI answer engine, the process doesn’t stop at one search. Under the hood, here’s what’s going on, based on Google’s AI Mode example:

1. Multiple Search Calls

The system creates several sub-queries that explore different angles of your initial question.

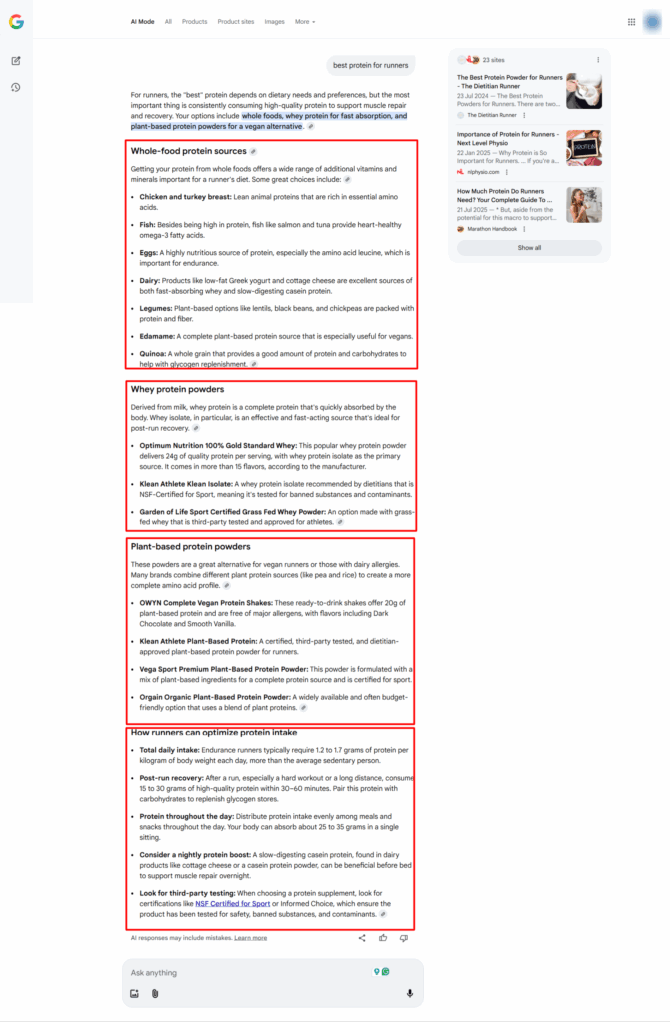

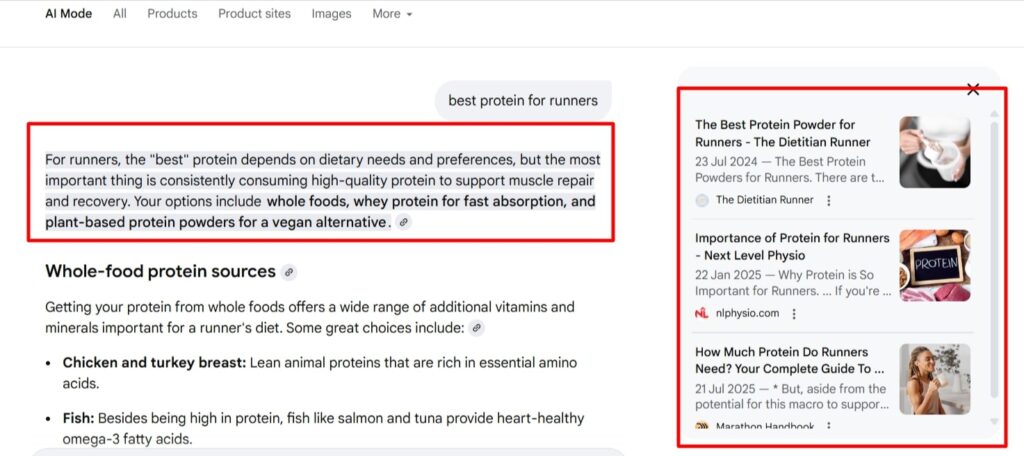

2. Result Clustering and Layered Citations

Instead of a single flat list of links, the engine groups retrieved results into clusters (by intent, source type, and entity type) and layers citations accordingly.

Example: One cluster might focus on “whole-food protein sources,” another on “whey protein powders,” and a third on “plant-based protein powders.”

3. Distinct Answer Snippets and Synthesized Overview

After retrieval and clustering, the AI generates a coherent answer overview, often at the top of the results, citing multiple sources.

4. Intent-Depth and Multi-Entity Reach

Because of the fan-out, the system reaches across entities (e.g., runners ↔ supplements ↔ recovery protocols) and intent (improve performance, recover faster, dietary restrictions).

If your content only aligns with the seed query and not these sub-branches, you risk being omitted from the clusters or not getting as many citations as you could.
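To make the four steps above more concrete, here is a minimal Python sketch of the fan-out, retrieve, and cluster flow. It is a toy illustration, not how Google's AI Mode is actually implemented: the `FAN_OUT` table, the `INDEX` of URLs, and all function names are hypothetical stand-ins. In a real system a language model would generate the sub-queries, and a search index would return the documents.

```python
from collections import defaultdict

# Hypothetical fan-out table: seed query -> (sub-query, cluster label).
# A real engine would generate these with a language model.
FAN_OUT = {
    "best protein for runners": [
        ("whole-food protein sources", "whole-food"),
        ("best whey protein", "whey"),
        ("plant-based protein", "plant-based"),
    ]
}

# Hypothetical mini index: sub-query -> URLs that answer it.
INDEX = {
    "whole-food protein sources": ["example.com/food-guide"],
    "best whey protein": ["example.com/whey-review", "example.org/whey-study"],
    "plant-based protein": ["example.org/vegan-protein"],
}


def fan_out(seed):
    """Step 1: expand the seed query into sub-queries."""
    return FAN_OUT.get(seed, [(seed, "general")])


def retrieve(sub_query):
    """Run one search call per sub-query against the toy index."""
    return INDEX.get(sub_query, [])


def answer_clusters(seed):
    """Step 2: group the retrieved sources by cluster, without duplicates."""
    clusters = defaultdict(list)
    for sub_query, cluster in fan_out(seed):
        for url in retrieve(sub_query):
            if url not in clusters[cluster]:
                clusters[cluster].append(url)
    return dict(clusters)


if __name__ == "__main__":
    for cluster, urls in answer_clusters("best protein for runners").items():
        print(cluster, "->", urls)
```

Notice that a page indexed only under the seed query never shows up in any cluster here. That is the same risk described above: sources earn citations branch by branch.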

Why LLMs Use Query Fan-Out in the First Place

When an AI like Google’s Gemini “fans out” your question, it’s essentially doing extra homework by checking multiple angles and sources to make sure its final answer stands on solid ground.

Tackle Ambiguity

A single query can mean many things. “Jaguar speed” could be a car spec or an animal fact. Fan-out spins up targeted sub-queries to test each meaning, then prioritizes the most likely intent.

Improve Factual Grounding and Reduce Hallucination

Rather than relying on one page, the model retrieves evidence from multiple sources for each branch of the query. This multi-query retrieval works like a cross-check.

It allows the AI to verify claims (like dates, figures, or definitions) before it writes its answer. If two or three independent documents support the same statement, the model can cite them and drop outliers, lowering the risk of confident-but-wrong answers, or hallucinations.
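The cross-check idea can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of any model's internals: the function name `corroborated_value`, the `min_sources` threshold, and the sample claims are all made up for the example.

```python
from collections import Counter


def corroborated_value(claims, min_sources=2):
    """Return the value backed by the most distinct sources,
    or None if no value reaches min_sources.

    claims: list of (source, value) pairs.
    """
    support = Counter()
    seen = set()
    for source, value in claims:
        # Count each source at most once per value.
        if (source, value) not in seen:
            seen.add((source, value))
            support[value] += 1
    if not support:
        return None
    value, count = support.most_common(1)[0]
    return value if count >= min_sources else None


if __name__ == "__main__":
    # Invented sample data: three sources disagree on a launch year.
    claims = [("site-a", "2019"), ("site-b", "2019"), ("site-c", "2021")]
    print(corroborated_value(claims))
```

Here the value backed by two independent sources is kept and the lone outlier is dropped. With only one source per value, the function returns `None` rather than guessing.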

Diversify Perspectives

Different content types answer different needs: a clinical study, a buyer’s guide, a forum thread, a brand website. Fan-out pulls across formats and entities to balance authority with practicality.

The SEO Impact: Why Query Fan-Out Matters More Than Optimizing for Keywords

Let’s say someone searches “best CRM software for small businesses.” An AI tool might fan that out into “best free CRM tools,” “CRM with email automation,” or “HubSpot vs Zoho for small teams.” Each of those mini-queries could surface different brands and different citations.

For SEOs, the opportunity lies in optimizing for multiple related queries rather than a single query group. The traditional approach of picking one primary keyword group and building a page around it now captures only a fraction of that opportunity. To stay visible in AI search, your content needs to align with multiple variations of the search intent around a topic, not just a single query.

You’re no longer optimizing for search terms; you’re optimizing for search intent.

Optimizing for Query Fan-Out: Practical Strategies for SEOs

1. How to Fan Out a Query

If search engines are fanning out queries behind the scenes, SEOs need to do the same during research. Your goal is to uncover the full range of meanings, intents, and subtopics that stem from your target query, then cover them either on the page you’re working on or on a separate page that you cross-link to it.

During SUSO’s recent webinar on how to measure AI visibility, our Partner Growth Manager, Cara Corbett, explained how to find questions your target audience might be asking LLMs.

Start by combining multiple data sources:

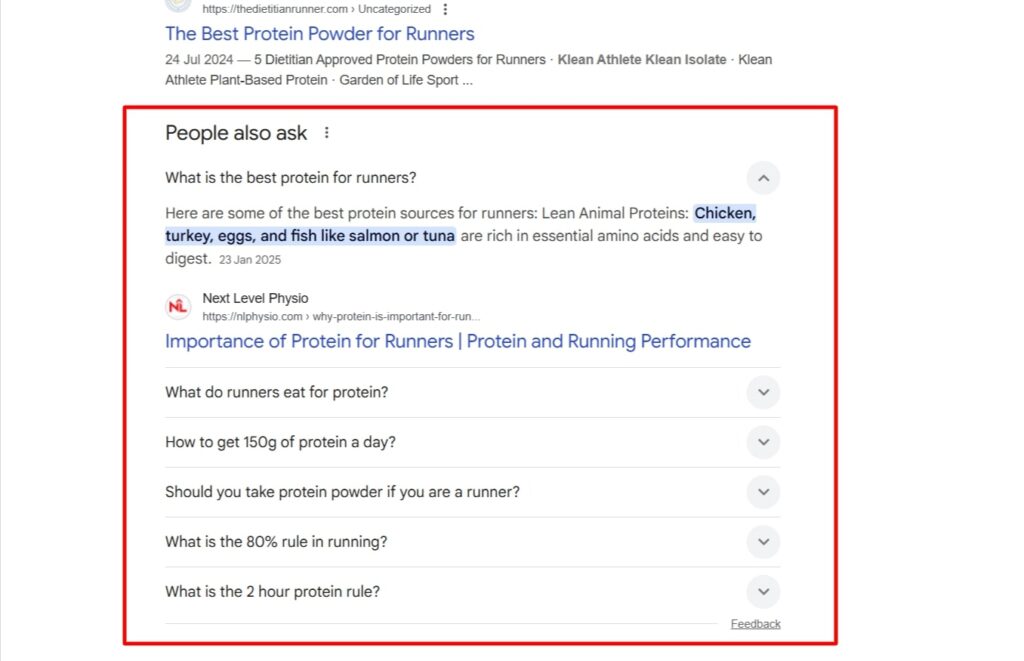

- Use specialized SEO tools (Ahrefs, Semrush, AlsoAsked) and “People Also Ask” results to map common follow-up questions.

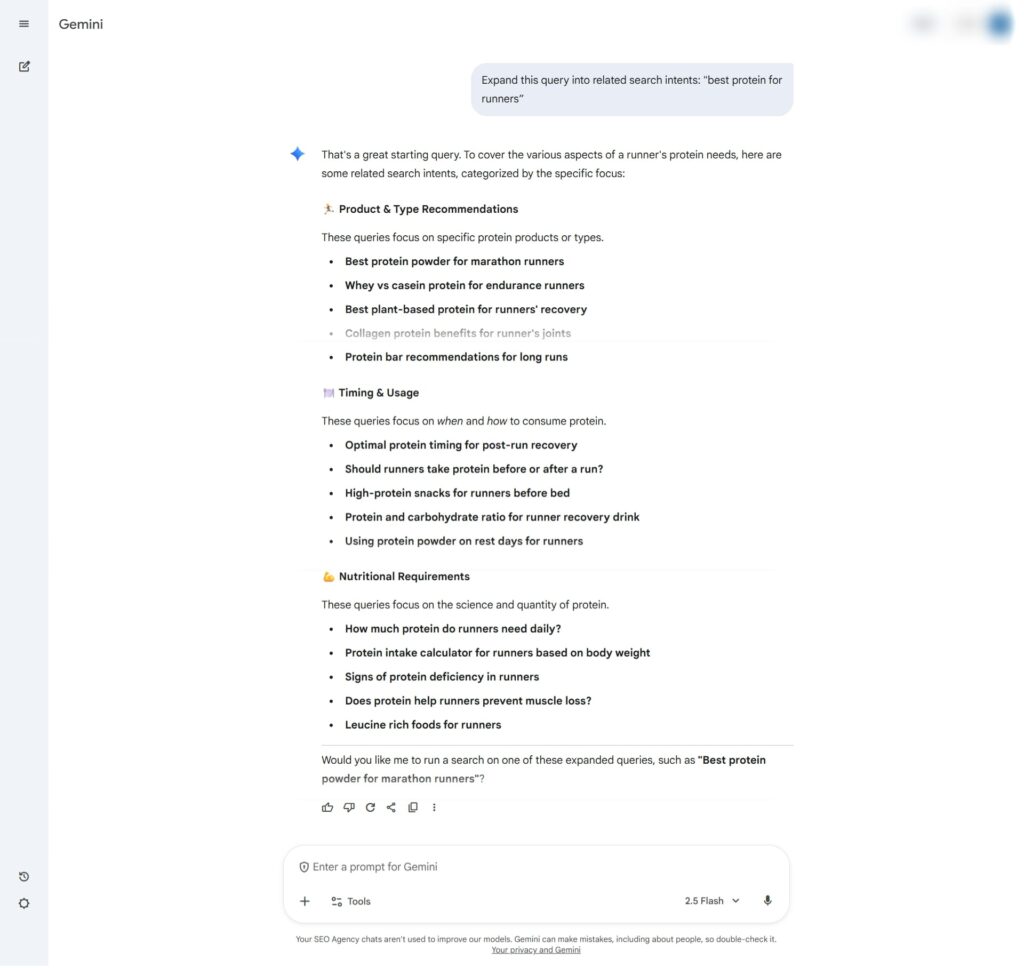

- Try prompts like “Expand this query into related search intents” in tools such as ChatGPT or Gemini to uncover how AI itself might interpret it.

Overlapping results across different tools usually signal high-value coverage areas, meaning topics that will likely appear in AI Overviews or conversational searches.

- Your own data can be just as valuable. Google Search Console can be a great tool for surfacing long-tail, question-style queries that reflect real user intent.

- If your website has a search bar or chatbot, set up tracking for those to capture the exact phrases people use.

- Ask your support or sales teams for common customer questions and concerns. These are often goldmines for intent expansion.

- Don’t ignore forums and social threads either. Platforms like Reddit and Quora reveal the authentic language people use when discussing a topic. Analyzing these conversations helps you capture natural phrasing and emotional context that AI systems often replicate when expanding user queries.
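Once you have exported queries from the sources listed above, a short script can surface the overlaps and the question-style phrasing. The sketch below is one possible approach, assuming you have already collected each source into a plain list of strings. The source names, sample queries, and helper functions are hypothetical, and the question-word list is a rough heuristic rather than a complete one.

```python
import re
from collections import defaultdict

# Rough heuristic: a query counts as a question if it starts with one of these.
QUESTION_WORDS = ("how", "what", "why", "which", "when", "where",
                  "who", "can", "does", "is", "should")


def normalize(query):
    """Lowercase, strip punctuation, and collapse whitespace."""
    return " ".join(re.sub(r"[^a-z0-9\s]", " ", query.lower()).split())


def is_question(query):
    """True if the normalized query starts with a question word."""
    words = normalize(query).split()
    return bool(words) and words[0] in QUESTION_WORDS


def overlapping_queries(sources, min_sources=2):
    """Return (query, source names) pairs for queries that appear in at
    least min_sources sources, most widely shared first."""
    seen_in = defaultdict(set)
    for name, queries in sources.items():
        for query in queries:
            seen_in[normalize(query)].add(name)
    hits = [(q, sorted(names)) for q, names in seen_in.items()
            if len(names) >= min_sources]
    return sorted(hits, key=lambda item: (-len(item[1]), item[0]))


if __name__ == "__main__":
    # Invented sample exports, one list per data source.
    sources = {
        "search_console": ["best protein for runners",
                           "How much protein do runners need?"],
        "people_also_ask": ["how much protein do runners need",
                            "Is whey good for runners?"],
        "support_tickets": ["is whey good for runners",
                            "how much protein do runners need?"],
    }
    for query, names in overlapping_queries(sources):
        print(query, "| question:", is_question(query), "| seen in:", names)
```

Exact-match overlap after normalization is deliberately strict. Two differently worded queries with the same intent will not be merged, so treat the output as a starting shortlist and group near-duplicates by hand.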

2. Turning Fan-Out Insights into Optimized Content

Once you’ve mapped your query clusters, the next step is to build content that covers them completely, not just at a surface level.

Focus on entities, not just keywords

Make sure your content clearly references related people, brands, products, and concepts that appear across the fan-out branches.

Build semantic completeness

Cover subtopics, comparisons, and question variations in subsections or FAQ blocks. Aim for each page to serve as a hub that fully satisfies multiple intents.
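One rough way to check completeness is to compare your mapped sub-queries against a page's headings and flag the ones with no obvious match. The Python sketch below does this with simple word overlap. The function names, the stopword list, and the 0.6 threshold are assumptions chosen for illustration. Word overlap is a crude proxy for the semantic matching AI systems use, so read the result as a prompt for manual review, not a verdict.

```python
import re

# Small illustrative stopword list; extend it for your own language and niche.
STOPWORDS = {"for", "with", "the", "a", "of", "to", "vs", "and", "in"}


def tokens(text):
    """Lowercased content words of a query or heading."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower())
            if w not in STOPWORDS}


def coverage_gaps(sub_queries, headings, threshold=0.6):
    """Return sub-queries whose words are not sufficiently covered
    by any single heading."""
    heading_tokens = [tokens(h) for h in headings]
    gaps = []
    for query in sub_queries:
        q_tokens = tokens(query)
        if not q_tokens:
            continue
        # Share of the query's words found in the best-matching heading.
        best = max((len(q_tokens & h) / len(q_tokens) for h in heading_tokens),
                   default=0.0)
        if best < threshold:
            gaps.append(query)
    return gaps


if __name__ == "__main__":
    sub_queries = ["best free CRM tools",
                   "CRM with email automation",
                   "HubSpot vs Zoho for small teams"]
    headings = ["Best Free CRM Tools Compared",
                "HubSpot vs Zoho: Which Fits Small Teams?"]
    print(coverage_gaps(sub_queries, headings))
    # prints ['CRM with email automation']
```

Each flagged sub-query is a candidate for a new subsection, an FAQ entry, or a separate cross-linked page.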

Structure for retrieval

Use clear headings, short factual summaries, tables, and definition-style answers so AI systems can extract information easily.

Strengthen connections

Use internal linking with contextual anchor text to tie related topics together and signal topical depth.

Observe AI behavior

Run your target queries through an AI tool of your choice. Note what follow-ups, entities, or evidence types appear. These patterns reveal how the model interprets the topic and which content formats it prefers to cite.

When you optimize like this, you help AI answer engines recognize your content as a relevant and reliable node across multiple interpretations of a query.

Winning When Queries Multiply

In the new search landscape, success no longer comes from ranking for a single keyword but from being visible across an entire cluster of related queries and intents.

To stay ahead, think beyond words on a page. Optimizing for AI Search requires focusing on entities, semantic completeness, and trust signals that help AI systems recognize your brand as an authoritative, reliable source.

When queries multiply, the winners are those who’ve built content ecosystems that reflect how people (and now machines) truly search and learn.